Thoughts on Debt and Migration

Created by Steven Baltakatei Sandoval on 2025-01-30T17:58+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2025-01-30T17:58+00.

Background

Some thoughts I had waking up when I contemplated the absurdity of deporting undocumented migrants in the United States who work and pay taxes to put their children through school.

Thoughts on Debt and Migration

Thought 1

The whole ethos of capitalism is that we delay the day on which debts must be paid by borrowing more and more from the future, preparing our children to be able to be more and more productive.

Thought 2

Since antiquity, cycles of revenge have been recognized as problems. Malthusian cycles of boom and bust, plenty and famine, are physically integral memories we wear in the form of fat: storage that carries us through a long winter or a dry season. Sometimes those stores run out and mothers must rob Peter to pay Paul so their children may have a chance to live.

Thought 3

Religions such as Christianity and Islam are rooted in the concept that cycles of violence may be broken by everyone in the community agreeing to sacrifice a scapegoat so all sore sides of an ongoing conflict may feel vindicated and ceasefires may be established. Christianity says Jesus should be the scapegoat of last resort, but many of its teachings are about preventive measures to minimize escalation of debts and losses: the concepts of charity from the wealthy and mercy for the poor. But the root driving force for all these ideas is mothers, fathers, and siblings grieving for their families killed during scarce times.

Thought 4

Only dynasties and lawyers really care about intergenerational debt. Dynasties because debt is their tool for maintaining power over their subjects through taxes (see David Graeberʼs Debt (2014)) and lawyers because they are priests of rule of law, mediators on the boundary layer between imperial statecraft and law-abiding civil order. Just as economics is a dumb calculator for putting whomever you want in power if enough taxpayers believe it, law is a tool to put whomever you want in power if enough voting military backs it. I donʼt forget that Samuel Coltʼs 1846 six-shooter won the West.

Thought 5

A society that alienates the workers that hold it up will fall. If they grow food, pay taxes, and raise children socialized in your schools, they are de facto citizens. A government fails when it cannot keep up with this fact.

Thought 6

Cycles of violence propel many into the US seeking opportunity to work. Many are raised in Christianity which teaches that the tools for breaking cycles of violence are sacrificing a scapegoat or receiving mercy from their creditors. By sacrificing and abandoning their homes instead of fighting often corrupt creditors, they are peacemakers seeking to break the cycles of violence by making themselves the sacrificial scapegoat.

Thought 7

The climate crisis is a cumulative reckoning of the externalities of burning fossil fuels that cannot be deferred except through terraforming, a process only envisaged in science fiction. Imaginary nation-state borders will not halt the mass migration of people fleeing deadly heat waves, sea level rise, and new severe weather patterns. Todayʼs “economic migration” will be a drop in the proverbial bucket. Do not expect walls and intimidation to hold back this tide.

Thought 8

Problems may be solved by tax-paying people, including the problems created by having more tax-paying people; whether they have a birth certificate or not is a formality so long as their taxes pay for the public services and infrastructure they use. If the economic benefit of tax-paying migrants does not convince people to welcome them, then the hosts likely have an ethnic nationalism mindset, a precursor to Fascism. In other words, one culture seeks dominance over other cultures rather than choosing to adapt even for its own economic benefit.

Conclusion

No real conclusion here except the obvious: the US should improve how it processes migrants. Tax-paying migrants are aware of cycles of violence and, by leaving political violence and scarcity, are actively avoiding them and seeking peace. To claim that such migrants are “invading” the US is absurd; ethnic nationalism is sufficient to explain the contradiction.

Galactic Median Citizen

Created by Steven Baltakatei Sandoval on 2025-01-27T22:59+ZZ under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2025-01-27T22:59+ZZ.

Summary

Below is a stream-of-consciousness essay I wrote after hearing from a friend disillusioned with their work helping develop smartphone technology.

My argument is that the smartphone is likely a very important ingredient for the future well-being of humanity to resist and fix the fascist tendencies of current United States politics. However, like the printing press, anything that connects humans more will unleash potential conflict previously inhibited by lack of information flow. In other words, racism and fascism are the first-order results of any new communication tool. See Gutenberg's printing press and the religious fervor sparked by Martin Luther. Ultimately, the printing press was a necessary ingredient for achieving a better society, but there was an ugly transition period required for societies to adjust. We're in such an adjustment period now.

Introduction

The productive reprieves we occasionally experience when people act en masse for their own collective benefit belie the stupidity of people.

Sometimes these tastes of rationality occur after revolutions or rarely when a benevolent king abdicates power. However, the forcing function of civilization is always the intellectual capacity of the median citizen.

Or is it? Anthropologists say that hunter-gatherers were polymath artisans. They had to be in order to craft tools in a world without infrastructure. Are archeologists simply not able to find ephemeral networks of ancient equivalents to today's postal workers? Even the most erudite and handy 21st century person requires enormous amounts of resources to craft a computer chip; some video streamers successfully do so as a hobby but never for much profit. No, computer chips are made at scale by groups of highly specialized people in semiconductor fabs, relying on transportation networks and on legal and financial systems to facilitate the trade. Ordinary people simply buy tools with money, which is its own can of worms.

But getting back to the question: is it really the intellect and crafting ability of the median person that defines the upward or downward course of civilization in some cumulative happiness space? Even the act of defining the space's metric as “cumulative happiness” sounds sketchy. But the hangnail dangles: how can we extend those brief moments of monopolists turning philanthropist, of people pursuing art and science rather than power, of exploration without conquest? Yes, I'm talking public libraries, school lunches, Broadway, and space probes.

Missing ingredient

Perhaps there is some missing organ. Some brain chemical of empathy that Homo sapiens have yet to develop and whose absence inhibits collective action. The macrobiological equivalent of what converts unicellular singletons into biofilms into microbial mats into cylindrical sea sponges into people that launch space probes into galaxy-trekking scientists searching for anything more interesting than the Big Bang. Maybe it isn't a brain chemical but some material-agnostic architecture of neural network data filtration that simply isn't viable with our meat brains. Maybe raising a galactic citizen from Homo sapiens stock is like trying to build a skyscraper with wet sand. I suspect this is the case, but I doubt such a cognitive-social disruption will come sooner by simply sitting back and letting the currently fashionable oligarch-in-charge say “Let some rando create this transhuman ubermensch Frankenstein and if they prove profitable and exploitable, we'll buy their startup out and install our own subroutines into their kind and ride this Akira-class Leviathan to the stars.” The kind of person I want to build, one that will value exploration over conquest and science over influence, must be architecturally robust enough to survive attempts at corruption by mayfly exploiters. Put them in a den of hungry wolves and they'll convince them to build bridges, use protein printers instead of eating sheep, and lower their Gini coefficient.

Whatever the answer is, it must be found or all these thoughts and people will quickly become squiggles in mildly radioactive sandstone when the rollercoaster ride through happiness space dips below the medieval threshold, even momentarily. It's a bit ironic that the most enduring gesture of the Roman Empire is the Nazi salute, itself a product of fascist Italian longing for a lost golden age for centuries until the Renaissance. The knowledge of how Rome fell is obscured due to data loss caused by the same fall, but if we could track the daily life of a median citizen in detail, I think we could, from our future perspective, see echoes and analogues of demagogues and charlatans from our time. Surely a robust history recording mechanism should be an integral part of the transhuman galactic citizen.

By the way, is this pining for a transhuman superhero racist? Eugenical? Do I have such a low opinion of the median US citizen to not expect them to become more than a geologic film of microplastics footnoted in textbooks as “Anthropocene”? Honestly? Yes. Does that make me a misanthrope? No!

Generational improvement

“Children should strive to be better than their parents.” That “should” carries a lot of philosophical baggage, but the sentiment, I think, is a popular unspoken one, albeït evolutionarily enforced; babies do not simply grow on trees and children are indistinguishable from suicidal drunks until they can vote; possibly beyond, now that I think about it. But, nevertheless, as the author of this prose preparing to toss it into the Commons, I think we, as the current generation of Earth citizens, owe it to the next seven generations to invent the tools they'll need to improve themselves. Did sailors spooling out trans-Atlantic telegraph cables have a similar sentiment? Do fiber-optic technicians feel moral satisfaction at connecting rural communities? I hope so, but I've never read any accounts. Microblogging such ideas is such a niche hobby.

So, what kind of groundwork should we lay for the transhuman hero? I omit the “super” since I'm concerned with median people, not outlier geniuses that I believe are mostly Gilderoy Lockhart salesmen anyway. Wikipedia and Creative Commons cover History. Debian GNU/Linux and its use of the Free Software Foundation's GPLv3 license cover the Software which History today runs on. ActivityPub-based platforms like Mastodon and Lemmy cover social Media. RISC-V is a start for lowering the barrier to improving computing. Solar panels are the obvious choice for decentralized energy production resilient against centralized manipulation. The IETF and W3C cover data protocols, although the boring parts of physical infrastructure and hardware implementation need attention. As far as governance, a mix of Modern Monetary Theory, socialism, and environmentalism, driven by decentralized money (see The Ministry for the Future (2020) by Kim Stanley Robinson), is the most equitable mixture I can imagine.

As for changing up the baseline Homo sapiens body plan, the most realistic augmentation I can think of is already underway with the smartphone. If artificial augmentations to human senses make a person a cyborg, then prescription glasses make those that use them transhuman to some degree. It would be nice if I could rest on my laurels and say “Smartphones exist! The galactic citizen is already here!” similar to how someone from 1890 could point at a steam locomotive and declare “Steam power exists! Prosperity from global trade is here!” But smartphones didn't prevent Donald Trump from being elected. In the short term, they helped rally the median US citizen, who, as the 2024 presidential election proved, is okay with burning the government down with fascism so long as it makes their gut feel good. Likewise, the invention of steam engines did not prevent Standard Oil from monopolizing fossil fuel energy in the US. Antitrust legislation from elected representatives later rectified that, but only after the damage was done and, even then, only temporarily until the Reaganomics of the 1980s. Should I be thinking more along the lines of Neuralink, the closest popular approximation of the EyePhone of Futurama? Again, I'm more concerned with the median citizen, not techbro toys. Paper ballots, newspapers, books, and guillotines were sufficient for the French Revolution. What I'm looking for is more along the lines of Gutenberg's printing press rather than Samuel Colt's 1846 six-shooter. Perhaps a pocket neural net / personal knowledge database issued to everyone in elementary school to help them integrate their learnings and observations? Again, that sounds like a smartphone. Maybe “universal cultural integrator”, the secret sauce I'm looking for, will become a standard function next to “stopwatch”, “pocket calculator”, and “flashlight”. Like, a sapient talking embodiment of Wikipedia or, more palatably, a shadow of yourself that you treat as an extension of yourself, much like how mitochondria are integral parts of eukaryotic cells.

Smartphones to resist corruption

Of course, the smartphone is still very much a toy of privilege built using rare earth metals mined with exploited labor and burdened with proprietary licenses. The printing press facilitated the Enlightenment by lowering the cost of acquiring information but also lowered the cost of religious propaganda, leading to violent ideological conflicts. The potential for conflict existed before the printing press; its advent arguably unleashed it. Similarly, smartphones lower the cost of information transfer and unleash existing potential for conflict; the reëlection of Donald Trump and his MAGA movement are examples of this: wealthy monopolists and upper middle class sycophants pay for and spread propaganda cementing fascist power in election results by convincing the median voter that a strongman mob boss isn't so bad. Facebook notifications, supercomputers. Twitter centralized control of social media data flows in data centers, offering a turnkey propaganda machine ready for exploitation by the likes of billionaire Elon Musk, who did buy it in 2022 and used it in 2024 to promote fellow billionaire Donald Trump. So, even if, long-term, smartphones are the key ingredient for a galactic median citizen, they will unleash unrest and trigger conflicts as they connect racists with racists who suddenly get much entertainment and satisfaction from swinging around their unrealized collective power.

Conclusion

In summary, given the cyclical nature of politics, one should not assume the high points of societal satisfaction are the norm. Instead, analyze the median citizen and their capacity for empathy. Communication tools like printing presses and smartphones help improve the median citizen's ability to overcome barriers to transmit information; however, first out the gate are malicious applications reflecting festering pent-up conflicts held back until that point by those same barriers. Therefore, initial misuses of communications technology should not discourage developers from improving the technology.

FreedomBox Mediawiki Fail2ban filter

Created by Steven Baltakatei Sandoval on 2023-12-28T15:37-08 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-12-31T21:36-08.

Summary

I noticed a significant increase in CPU usage on a public FreedomBox webserver I run for publishing my notes via a MediaWiki instance at reboil.com/mediawiki. The high usage was caused by frequent expensive requests for dynamically generated special pages. I implemented two solutions: modifying the server's robots.txt and creating a fail2ban filter.


Background

Note that this procedure will likely only work for FreedomBox instances since FreedomBox itself makes automatic configuration file changes. For example, fail2ban should already be installed and maintained by FreedomBox.

FreedomBox is a Debian package that converts the machine it is installed on into a personal cloud server. Specifically, it converts the machine into an Apache server with a web UI for installing apps such as WordPress (blog), MediaWiki (wiki), Bepasty (file sharing), Ejabberd (XMPP chat server), Postfix/Dovecot (email server), OpenVPN, and Radicale (calendar and addressbook), among others. See the Manual for details.

All files are created or edited with root access by a FreedomBox account with administrator privileges, after logging in via ssh and running:

$ sudo su -
#

This article assumes a basic knowledge of GNU/Linux such as logging into your FreedomBox via ssh, editing text files via the command line, viewing file contents with cat, running Bash scripts, and being aware of file ownership issues.

Analysis

I detected the high traffic via a journalctl command resembling the following:

journalctl

# journalctl --output=short-iso --follow

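A more focused command is described in the following Bash script run as the root user.

#!/bin/bash
# Follow the journal, keep only Apache access log entries, and page
# through them with less in follow mode (-S disables line wrapping).
journalctl --output=short-iso --follow | \
grep --line-buffered "apache-access" | \
less -S +F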

Below are portions of example lines of expensive index.php? requests.

/mediawiki/index.php?returnto=1770-03-07&returntoquery=redirect%3Dno&title=Speci
/mediawiki/index.php?target=1770-03-22&title=Special%3AWhatLinksHere HTTP/1.1" 4
/mediawiki/index.php?action=history&title=1784-03-15 HTTP/1.1" 200 5025 "-" "Moz
/mediawiki/index.php?returnto=1770-03-07&returntoquery=redirect%3Dno&title=Speci
/mediawiki/index.php?action=history&title=1784-03-15 HTTP/1.1" 200 6494 "-" "Moz
/mediawiki/index.php?action=edit&title=1770-03-08 HTTP/1.1" 200 5031 "-" "Mozill
/mediawiki/index.php?target=1862-04-02&title=Special%3AWhatLinksHere HTTP/1.1" 4
/mediawiki/index.php?action=edit&title=1770-03-08 HTTP/1.1" 200 4911 "-" "Mozill
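To see which clients are behind requests like these, a rough tally per client address can help. The following is only a sketch: the function name count_index_requests is made up for illustration, the sample addresses come from documentation ranges, and it assumes plain Apache "combined" log lines in which the client address is the first field. Lines taken from journalctl carry extra leading fields and would need trimming first.

```shell
#!/bin/bash
# Count requests to /mediawiki/index.php? per client address.
# Assumption: each input line is in Apache "combined" log format, so the
# client address is the first whitespace-separated field.
count_index_requests() {
    grep -F '/mediawiki/index.php?' \
        | awk '{ count[$1]++ } END { for (ip in count) print count[ip], ip }' \
        | sort -k1,1nr -k2,2
}

# Usage with made-up sample lines:
count_index_requests <<'EOF'
203.0.113.5 - - [28/Dec/2023:15:00:01 -0800] "GET /mediawiki/index.php?action=history&title=1784-03-15 HTTP/1.1" 200 5025 "-" "Mozilla/5.0"
203.0.113.5 - - [28/Dec/2023:15:00:03 -0800] "GET /mediawiki/index.php?action=edit&title=1770-03-08 HTTP/1.1" 200 5031 "-" "Mozilla/5.0"
198.51.100.7 - - [28/Dec/2023:15:00:04 -0800] "GET /mediawiki/Moon HTTP/1.1" 200 9000 "-" "Mozilla/5.0"
198.51.100.7 - - [28/Dec/2023:15:00:09 -0800] "GET /mediawiki/index.php?title=Moon HTTP/1.1" 200 9000 "-" "Mozilla/5.0"
EOF
```

The output lists the busiest clients first, one "count address" pair per line, which makes a crawler hammering index.php stand out quickly.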

top

High CPU usage was indicated by the appearance of multiple php-fpm7.4 processes in the output of the top command, a task manager available on most Unix-like operating systems such as Debian 12.

dstat

dstat was a system performance monitoring utility that I was fond of. FreedomBox uses a rewritten Red Hat version that took over the dstat name and lacks the handy --top-cpu option, but the following command should still work to show you relevant CPU information, outputting averaged data in a line every 60 seconds:

# dstat --time --load --proc --cpu --mem --disk --io --net --sys --vm 60

dool

dool is the Python 3 compatible fork of dstat that isn't tracked by Debian but which recreates the dstat behavior I'm used to, including the --top-cpu option. You can install it into local user space via:

# git clone https://github.com/scottchiefbaker/dool.git dool
# cd dool
# ./install.py
You are root, doing a local install

Installing binaries to /usr/bin/
Installing plugins to /usr/share/dool/
Installing manpages to /usr/share/man/man1/

Install complete. Dool installed to /usr/bin/dool

You can then run the command via:

# dool --time --load --proc --cpu --top-cpu --mem --disk --io --net --sys --vm 60

Installing for use by the

Methodology

robots.txt

According to the MediaWiki Manual for robots.txt, requests from webcrawlers to index.php may be disallowed by adding the following text to a server's robots.txt file. In my particular FreedomBox instance, the file is located at /var/www/html/robots.txt. I use the cat command merely to show the contents and location of the file for this explanation.

# cat /var/www/html/robots.txt
User-agent: *
Disallow: /mediawiki/index.php?

No restart of the apache2 service should be necessary.

index.php is the main access point for a MediaWiki site. In my FreedomBox installation, MediaWiki pages are served by default at URLs omitting index.php. For example, my article on the Moon is served by default at https://reboil.com/mediawiki/Moon. Notably, https://reboil.com/mediawiki/index.php?title=Moon also works, but it will trigger the fail2ban filter described below.

This modification of robots.txt alone is an indirect way to reduce web crawler requests for dynamically generated MediaWiki pages but it relies on coöperation from webcrawlers themselves to honor the Disallow request. The following fail2ban filter is an active response that bans IP addresses that make repeated requests.
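To illustrate which URL paths the Disallow line is meant to cover, here is a simplified sketch of prefix matching. The function name is_disallowed is hypothetical, and real crawlers also deal with wildcards, Allow lines, and per-agent groups, none of which this models.

```shell
#!/bin/bash
# Simplified model of robots.txt matching: a path is disallowed when it
# starts with the value of the Disallow rule shown above.
is_disallowed() {
    rule='/mediawiki/index.php?'
    case "$1" in
        "$rule"*) return 0 ;;   # quoted, so the "?" is matched literally
        *)        return 1 ;;
    esac
}

# Usage:
is_disallowed '/mediawiki/index.php?title=Moon' && echo "disallowed"
is_disallowed '/mediawiki/Moon' || echo "allowed"
```

Under this model the short URL for a page stays crawlable while every index.php? variant of it is off limits to well-behaved crawlers.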

fail2ban filter

Fail2ban is a program used by default with a FreedomBox instance. A generic tutorial for configuring it is available here.

For my instance, I set up the filter by creating two files and running a systemd command to restart the fail2ban service.

The first file to create sets up a filter for fail2ban that uses a regular expression to identify requests for index.php. It is a 3-line file named mediawiki.conf saved in /etc/fail2ban/filter.d/.

# cat /etc/fail2ban/filter.d/mediawiki.conf
[Definition]
failregex = <HOST> -.*"GET /mediawiki/index\.php\?.*"
ignoreregex =
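Before relying on a filter like this, it can help to check the pattern against a few sample lines. The sketch below is an approximation only: the function name matches_filter is made up, the <HOST> tag is replaced by a plain IPv4 pattern, and grep -E (POSIX extended regex) stands in for the Python regex engine that fail2ban itself uses. The sample log lines are invented.

```shell
#!/bin/bash
# Rough stand-in for the failregex above.
# Assumptions: <HOST> is approximated by an IPv4 pattern, and the line
# starts with the client address as in Apache's combined log format.
matches_filter() {
    pattern='^([0-9]{1,3}\.){3}[0-9]{1,3} -.*"GET /mediawiki/index\.php\?.*"'
    printf '%s\n' "$1" | grep -Eq -- "$pattern"
}

# Usage with made-up log lines:
matches_filter '203.0.113.5 - - [28/Dec/2023:15:00:01 -0800] "GET /mediawiki/index.php?title=Moon HTTP/1.1" 200 9000' \
    && echo "counts toward a ban"
matches_filter '203.0.113.5 - - [28/Dec/2023:15:00:02 -0800] "GET /mediawiki/Moon HTTP/1.1" 200 9000' \
    || echo "ignored"
```

For a check against the real filter file and real logs, fail2ban ships a fail2ban-regex utility intended for that purpose.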

The second file is a jail.local which references the mediawiki.conf file (via the [mediawiki] line) and specifies the trigger and reset conditions for an IP address ban.

# cat /etc/fail2ban/jail.local
[mediawiki]
enabled = true
port = http,https
filter = mediawiki
logpath = %(apache_error_log)s
maxretry = 60
findtime = 600
bantime = 3600

The logpath line is specific to how FreedomBox configures its logs when applying fail2ban to other applications. maxretry is the number of requests within a window of findtime seconds that will trigger a ban lasting bantime seconds.

In this particular example, a webcrawler requesting 60 or more pages via my MediaWiki's index.php access point within a 10-minute window (findtime is 600 seconds) will get a 1-hour ban on its IP address. The dynamically generated pages a typical human user would request are mostly page histories and edit forms. I find it implausible for a human to make sixty such requests in ten minutes (that's one request per ten seconds) and so I find these limits reasonable.
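The arithmetic behind these thresholds can be checked with a few lines of shell. This is just an illustrative helper (the name describe_jail is made up), fed with the maxretry, findtime, and bantime values from the jail.local above.

```shell
#!/bin/bash
# Summarize a jail's thresholds in human terms.
# Arguments: maxretry, findtime (seconds), bantime (seconds).
describe_jail() {
    maxretry=$1
    findtime=$2
    bantime=$3
    echo "ban after ${maxretry} requests within $((findtime / 60)) min" \
         "(about one every $((findtime / maxretry)) s);" \
         "ban lasts $((bantime / 60)) min"
}

describe_jail 60 600 3600
# prints: ban after 60 requests within 10 min (about one every 10 s); ban lasts 60 min
```

Trying other values this way makes it easy to judge whether a stricter or looser limit would still leave room for a human editor.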

In my instance, I had to create the jail.local file. According to the Linode tutorial, jail.local is meant to permit a local administrator to extend and override configurations established in default .conf files such as fail2ban.conf.

To immediately apply the new fail2ban filter, the service must be restarted:

# systemctl restart fail2ban.service

Current bans can be viewed via a fail2ban-client command:

# fail2ban-client status mediawiki
Status for the jail: mediawiki
|- Filter
|  |- Currently failed: 1
|  |- Total failed: 5920
|  `- Journal matches:
`- Actions
   |- Currently banned: 1
   |- Total banned: 51
   `- Banned IP list: 47.76.35.19

Relevant system information

- FreedomBox version: 23.6.2

- Operating system: Debian GNU/Linux 11 (bullseye)

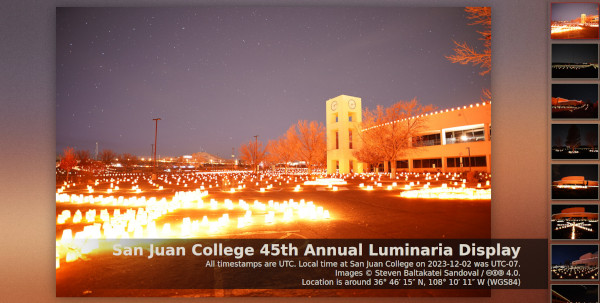

San Juan College 45th Annual Luminaria photos

Created by Steven Baltakatei Sandoval on 2023-12-04T04:51+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-12-04T05:37+00.

Summary

On 2023-12-02, I attended the 45th Annual Luminarias display held by San Juan College (SJC). I took some photos which I have uploaded to a static image gallery using fgallery.

Background

Growing up in Farmington, NM, I had considered the holiday luminaria display at SJC to be a routine celebration observed everywhere. It wasnʼt until after I left the area that I realized lighting thousands of candles inside paper bags was a somewhat niche tradition. The Wikimedia Commons category Luminaries in the United States shows some photographs but none of the San Juan College event which has been going on for over 4 decades. At some point I'll upload some photos to Commons but I wanted to get some select photos uploaded to my static resource site https://reboil.com/res/.

2023-10-14 Annular Solar Eclipse

Created by Steven Baltakatei Sandoval on 2023-10-15T09:31+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-10-15T10:40+00.

(Image © Steven Baltakatei Sandoval, 🅭🅯🄎4.0)

Summary

I took a photograph of the 2023-10-14 solar eclipse from Vancouver, Washington. I uploaded a copy to Wikimedia Commons here.

Background

I got lucky and managed to get just the right amount of cloud cover to take a photo without any special equipment besides my Sony a7 III with a 70-300mm telephoto lens.

I had only known about the eclipse for a few days, not having tracked it in my notes since it was not a total solar eclipse. However, some family members let me know it was happening and I noticed I wouldn't have to travel very far from my Vancouver, Washington, dwelling to see a significant occultation. I saw that it was scheduled to be viewable in my local morning around 2023-10-14T09:00-07. I prepared my eclipse glasses the night before.

So, when I woke up on the day of the eclipse, despite being somewhat disappointed at seeing an overcast sky, I went outside a few minutes before maximum occlusion. I found my roommates were already outside looking to the southeast at a bright spot in the clouds where the sun was. The morning clouds were clearing. I saw that some variation in cloud cover would allow me to see the eclipse without any glasses. I also saw that it was possible for me to use the cloud cover to take a photo with my camera. I quickly retrieved my camera and snapped a few photos when a dark cloud passed by. The result allowed me to clearly see the crescent shape of the partial solar eclipse.

I then downloaded my photos to a computer, cropped one of the better images, then uploaded it to my Mastodon account, Wikimedia Commons, and my website. Some commenters said the image looked like it could be an album cover; I told them anyone was free to use it under the CC BY-SA 4.0 license.

To be honest, there are many higher quality photographs than mine. In particular, I like this set by a Ross A. Whitley, taken from the San Jose Mission in San Antonio, Texas.

(Image © Ross A. Whitley, 🅭🅮1.0)

References

- Ross A. Whitley. (2023-10-14). “Annular Eclipse 2023”. Flickr. Accessed 2023-10-14.

- Steven Baltakatei Sandoval. (2023-10-14). “2023-10-14 solar eclipse from Vancouver, WA”. Wikimedia Commons. Accessed 2023-10-14.

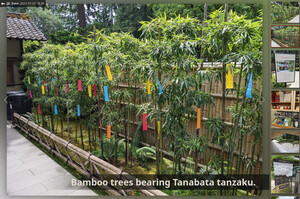

fgallery example

Created by Steven Baltakatei Sandoval on 2023-07-07T01:40+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-07-07T03:41+00.

Summary

I wanted to test out a static HTML+JavaScript photo gallery package I found on Debian to answer a question on a Lemmy thread about self-hosted photo galleries. The end result was this example gallery.

Setup procedure

On a Debian 11 system, the following commands may be used to create an fgallery image gallery.

Install fgallery

$ sudo apt update;

$ sudo apt install fgallery;

Install optional tools

$ sudo apt install jpegoptim pngcrush 7zip;

Build a gallery from photos in photo-src/.

#!/bin/bash
din=./photo-src ;
dout=./test4 ;
title="Tanabata at Portland Japanese Gardens, July 2022 - Steven Baltakatei Sandoval";
fgallery -c txt -s -j4 --index="https://reboil.com/mediawiki/2022-07-07" \
"$din" "$dout" \
"$title" && \
python3 -m http.server -d "$dout";

The input directory path is set in din, the output directory path is set in dout.

The title of the webUI page is set in title.

The -c txt option tells fgallery to look for captions for an image in a text file named exactly the same as the image file except that the final extension (e.g. .jpg of photo-src/foo.jpg) is replaced with .txt (e.g. photo-src/foo.txt).

The -s option prevents the original image files from being included as an archive in the output and disables downloading of such an archive.

The -j4 option specifies that up to 4 image processing jobs may be performed in parallel in order to speed up creation of the output directory contents if at least 4 CPUs are available.

The --index option specifies the URL for the "Back" button at the top left of the gallery webUI.

The && specifies that the next command, python3, should only run if the fgallery command succeeds.

The \ at the end of some lines tells bash to ignore the newline (so longer commands in the script are broken up to be more readable).

The python3 -m http.server -d "$dout" command creates a simple local HTTP server at the address http://localhost:8000 so a web browser can view the webUI without there being a need to upload anything to any remote server.
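The caption naming rule used by -c txt can be sketched in shell with a parameter expansion that strips the final extension. The helper names caption_path and list_missing_captions are made up for illustration, and the sketch only looks at .jpg files; it says nothing about what fgallery expects inside a caption file.

```shell
#!/bin/bash
# Map an image path to the caption path described above: the final
# extension is replaced with .txt (photo-src/foo.jpg -> photo-src/foo.txt).
caption_path() {
    printf '%s.txt\n' "${1%.*}"
}

# List the .jpg images in a directory that have no caption file yet.
list_missing_captions() {
    for img in "$1"/*.jpg; do
        [ -e "$img" ] || continue   # the glob matched nothing
        [ -e "$(caption_path "$img")" ] || printf '%s\n' "$img"
    done
}

caption_path photo-src/foo.jpg
# prints: photo-src/foo.txt
```

Running list_missing_captions ./photo-src before building the gallery is one way to spot photos that would end up without a caption.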


Migration to Lemmy

Created by Steven Baltakatei Sandoval on 2023-06-15T10:05+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-06-15T18:27+00.

On 2023-06-12, after I noticed a surge of moderators setting major subreddits private, I decided to explore federated alternatives to Reddit which I had heard existed. After some searches, I found mentions of Lemmy and Kbin.

I checked through a list of Lemmy instances and created a spreadsheet factoring in admin age, uptime, and domain name length. I used that to generate a short list to let me review the post history of admins. After this review, I decided that the Lemmy instance sopuli.xyz was the most appropriate for me; I was looking for a smaller instance with at least some months of history. Sopuli.xyz seems to have been started by a Finn who set rules against posts about QAnon, Nazis, and other bigoted groups I don't want to associate myself with. So, I applied and, as of 2023-06-14, now have an account there.

I now look forward to contributing comments to a more decentralized forum.

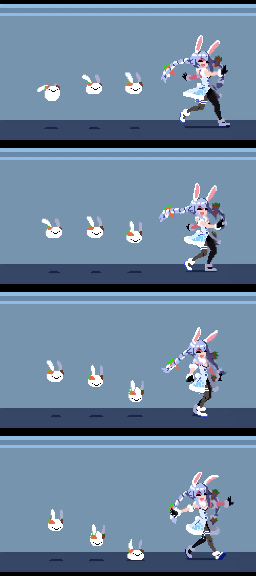

Usada Pekora BGM animation synchronization

Created by Steven Baltakatei Sandoval on 2023-06-09T21:35+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-06-09T23:14+00.

Summary

I got annoyed at the lack of a properly synchronized Usada Pekora walking animation on YouTube, so I created one. I wrote a Bash script to assemble frames generated by FFmpeg which I then imported into Kdenlive in order to combine with an audio loop edited in Audacity. It took a fair amount of work so I thought I'd write up what I did in TeXmacs.

Links

- YouTube

- Git repository (Git bundle)

- Write-up (PDF)

- Wiki entry.

Meta

This was project BK-2023-04.

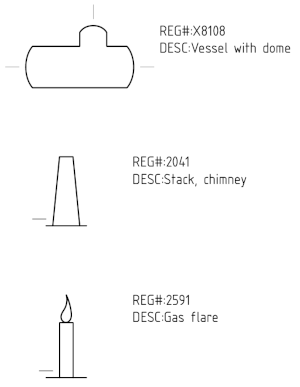

ISO 10628 symbol drawing update

Created by Steven Baltakatei Sandoval on 2023-05-24T07:30+00 under a CC BY-SA 4.0 (🅭🅯🄎4.0) license and last updated on 2023-05-31T19:55+00.

Edit (2023-05-31): Add reboil.com wiki links.

Summary

On 2023-05-23, I updated a drawing I made in 2020-09 to serve as a palette for chemical engineering symbols I might use when constructing my own P&IDs.

Background

Back in 2020-09, I became interested in creating P&IDs for use in my personal notes and in the DeVoe's Thermodynamics and Chemistry transcription project of mine (BK-2021-07). I decided to find an industry consensus standard set of symbols so that my drawings (which I planned to license CC BY-SA 4.0 for use in Wikipedia and Wikimedia Commons) could be used by other people. Therefore, I purchased a copy of ISO 10628-2:2012 and manually drew each symbol in Inkscape.

On 2020-09-25, I uploaded a set of drawings showing all the ISO 10628-2:2012 symbols to Wikimedia Commons (See BK-2020-04-PID-1-SHT1 SVG file). I split the upload into several separate SVG files, due to lack of multi-page support for SVGs in Inkscape. I also uploaded a set of PDF files exported from Inkscape since the ISO 3098 font I chose for the drawing (osifont in the Debian repository) wasn't supported by Wikimedia Commons.

In 2023-02, I received an email from a John Kunicek about the symbol numbering system used in the ISO 10628 symbols I drew in BK-2020-04-PID-1. They also informed me of some spelling errors. On 2023-02-27, I reviewed Kunicek's questions and concluded that the symbol numbers in ISO 10628 follow a pattern established in another standard, ISO 14617, of which ISO 14617-1:2005 is an index of registration numbers for symbols used in other ISO standards such as ISO 10628. On 2023-02-28, I sent my reply to Kunicek and updated the BK-2020-04-PID-1 legends to address the ambiguities of the original legend, which was limited to the information provided in my copy of ISO 10628-2:2012.

On 2023-03-01, while I had the files on my mind, I also decided to edit the SVG files of BK-2020-04-PID-1 so that each symbol and its associated text objects containing ISO 10628 descriptions and registration numbers were grouped together. This update would enable someone to easily copy and paste individual symbols if they edited the drawing in Inkscape. Likewise, a machine performing a text search of the body of the SVG file itself could quickly find the registration numbers and use them to identify the associated symbols nearby in the XML tree of the SVG file. Previously, the symbol objects and text objects were mixed together in a hodgepodge; symbols and their associated text objects were only obviously related to a human looking at the rendered image. I didn't push the updated SVG files since I wanted to wait and see if any more corrections or questions came from Kunicek or others.
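The kind of machine lookup that the grouping enables can be sketched roughly as follows. This is only an illustration, not the structure of the actual BK-2020-04-PID-1 files: the miniature SVG, the group ids, the labels, and the registration numbers `X0000` and `X0001` are all hypothetical placeholders, and `find_symbol_group` is a name made up for this example.

```python
import xml.etree.ElementTree as ET

SVG_NS = "{http://www.w3.org/2000/svg}"

# Hypothetical miniature SVG. The ids, labels, and registration numbers
# are placeholders and do not come from ISO 10628 or the real drawing.
SAMPLE_SVG = """<svg xmlns="http://www.w3.org/2000/svg">
  <g id="symbol-a">
    <path d="M0 0 L10 10"/>
    <text>X0000</text>
    <text>Example symbol A</text>
  </g>
  <g id="symbol-b">
    <circle r="5"/>
    <text><tspan>X0001</tspan></text>
    <text>Example symbol B</text>
  </g>
</svg>"""


def find_symbol_group(svg_text, registration_number):
    """Return the first <g> element containing a <text> equal to the number."""
    root = ET.fromstring(svg_text)
    for group in root.iter(SVG_NS + "g"):
        # itertext() also collects text nested in <tspan> children,
        # which is how Inkscape usually stores text.
        labels = ["".join(t.itertext()).strip()
                  for t in group.iter(SVG_NS + "text")]
        if registration_number in labels:
            return group
    return None


group = find_symbol_group(SAMPLE_SVG, "X0001")
print(group.get("id"))  # prints "symbol-b"
```

Because the symbol's shapes and its text labels share one `<g>` parent, a single match on the registration number hands back everything needed to copy the symbol. With nested groups this sketch would return the outermost matching group, so a real tool would probably want the innermost one instead.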

On 2021-05-08, Wikipedia editor Lonaowna added thumbnails of the SVGs I uploaded to the ISO 10628 Wikipedia article. I hadn't wanted to do so myself since I felt it would have been a conflict of interest and rather self-promotional to push edits containing links to my self-published works.

On 2023-05-23, I decided to go ahead and upload to Wikimedia Commons the updates of the BK-2020-04-PID-1 SVG files containing the corrections and clarifications I applied in 2023-02/2023-03. I also made some adjustments to text placement since, annoyingly, Wikimedia Commons doesn't have an ISO 3098 compliant font for use in technical drawing SVG files; PNG previews of the uploaded SVGs showed text converted into a generic sans serif font that is 25% wider than that used by osifont. After some adjustments and reuploads, I ended up with satisfying SVG and PDF versions uploaded to Wikimedia Commons. Again, here is a link to the first sheet of BK-2020-04-PID-1; the description contains links to the other PDFs and SVGs for all the sheets.

Motivation

A significant amount of legwork is involved in creating a reference drawing when none meeting your criteria exists already. My criterion is that works I publish should be compatible with a Creative Commons BY-SA 4.0 license; creating BK-2020-04-PID-1, a palette of ISO 10628 symbols, was part of that process for me. I hope that the work I put in helps save other people time which they can dedicate to leisure. I believe leisurely people are the most capable of creative works. I would prefer to live in a world where projects such as Wikipedia and Wikimedia Commons can expand in scope to cover specialized disciplines, saving people the time of reinventing wheels.

Even if my uploaded work is hoovered up by an AI and spit out mixed with others, my carefully crafted drawings will remain causally upstream of the process. I think AI language models such as ChatGPT will lubricate on-demand knowledge downloads for the public, and the quality of those downloads depends upon the quality of the data set the language models are trained on. I'm okay with this process and hope to find other like-minded people who are willing to make a living making such contributions to common knowledge without the politics of worrying about using copyright to protect trade secrets.

Diné Bizaad Bínáhooʼaah Notes

Created by Steven Baltakatei Sandoval on 2023-02-01T09:31+00 under a CC BY-SA 4.0 license and last updated on 2023-05-31T19:10+00.

Edit(2023-05-31):Add reboil.com wiki links.

Background

In 2023-01, I decided to purchase a copy of "Diné Bizaad Bínáhooʼaah = Rediscovering the Navajo Language" to aid me in my studies of the Navajo language. I had tried out the Navajo lessons of Duolingo and found them problematic when it came to anything more complex than memorizing vocabulary (especially regarding verb conjugations).

So, as I read through it, I will record notes on this web page that I think other readers may find useful.

Stats

- Title: Diné Bizaad Bínáhooʼaah = Rediscovering the Navajo language : an introduction to the Navajo language

- Authors:

  - Evangeline Parsons Yazzie

  - Margaret Speas

- Editors:

  - Jessie Ruffenach

  - Berlyn Yazzie (Navajo)

- ISBN: 978-1-893354-73-9

- OCLC: 156845819

- Edition: 1st

- Printing: 3rd

- Publisher: Salina Bookshelf, Inc.

- Location: Flagstaff, Arizona

By page

Page xvii

The following hyperlink:

http://www.swarthmore.edu/SocSci/ifernal1/nla/halearch/halearch.htm

is not valid as of 2023-02-01. Searching pages under the swarthmore.edu domain yields this page which likely contains the material referenced (i.e. "If you are not sure how this can be done for Navajo, we suggest that you consult the materials on Situational Navajo, by Wayne Holm, Irene Silentman and Laura Wallace, available for download…"):

https://fernald.domains.swarthmore.edu/nla/halearch/halearch.htm

This page and one level of outlinks has been saved via the Internet Archive here.

Page 3

The consonant ʼ

The glyph used in the text to encode the consonant named "glottal stop" appears to be that of either MODIFIER LETTER APOSTROPHE (U+02BC) or RIGHT SINGLE QUOTATION MARK (U+2019) in Unicode.

However, due to widespread input method limitations, the ASCII character APOSTROPHE (U+0027) is often used instead.

The text addresses this:

You probably wonder why an apostrophe has been added to the list above. The letter that looks like an apostrophe is called a glottal stop. A glottal stop is a consonant. We will talk about the glottal stop in the section below on consonants.

In Navajo, the glottal stop is a consonant in the same class as k or x, which each have their own dedicated glyphs. A rational typesetter would not use MULTIPLICATION SIGN (U+00D7) (×) instead of LATIN SMALL LETTER X (U+0078) (x) even though both use similar glyphs. So, the question arises of whether to use MODIFIER LETTER APOSTROPHE (U+02BC) or RIGHT SINGLE QUOTATION MARK (U+2019).
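One quick way to see how the three candidate characters differ is to ask the Unicode Character Database for their names and general categories. The following is a small sketch using Python's standard `unicodedata` module; the helper name `describe` is made up for this example.

```python
import unicodedata

# The three characters under discussion: APOSTROPHE,
# RIGHT SINGLE QUOTATION MARK, and MODIFIER LETTER APOSTROPHE.
CANDIDATES = ["\u0027", "\u2019", "\u02bc"]


def describe(char):
    """Return (code point, Unicode name, general category) for one character."""
    return (f"U+{ord(char):04X}",
            unicodedata.name(char),
            unicodedata.category(char))


for char in CANDIDATES:
    print(*describe(char))
```

The general categories come back as `Po` (other punctuation) for U+0027, `Pf` (final quote punctuation) for U+2019, and `Lm` (modifier letter) for U+02BC. Only the last one is classified as a letter, which is the property a consonant needs.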

Regarding the difference, the Unicode Standard 15.0 (PDF) has this to say in its General Punctuation section of Writing Systems and Punctuation:

Apostrophes

U+0027 apostrophe is the most commonly used character for apostrophe. For historical reasons, U+0027 is a particularly overloaded character. In ASCII, it is used to represent a punctuation mark (such as right single quotation mark, left single quotation mark, apostrophe punctuation, vertical line, or prime) or a modifier letter (such as apostrophe modifier or acute accent). Punctuation marks generally break words; modifier letters generally are considered part of a word.

When text is set, U+2019 right single quotation mark is preferred as apostrophe, but only U+0027 is present on most keyboards. Software commonly offers a facility for automatically converting the U+0027 apostrophe to a contextually selected curly quotation glyph. In these systems, a U+0027 in the data stream is always represented as a straight vertical line and can never represent a curly apostrophe or a right quotation mark.

Letter Apostrophe. U+02BC modifier letter apostrophe is preferred where the apostrophe is to represent a modifier letter (for example, in transliterations to indicate a glottal stop). In the latter case, it is also referred to as a letter apostrophe.

Punctuation Apostrophe. U+2019 right single quotation mark is preferred where the character is to represent a punctuation mark, as for contractions: “We’ve been here before.” In this latter case, U+2019 is also referred to as a punctuation apostrophe.

An implementation cannot assume that users’ text always adheres to the distinction between these characters. The text may come from different sources, including mapping from other character sets that do not make this distinction between the letter apostrophe and the punctuation apostrophe/right single quotation mark. In that case, all of them will generally be represented by U+2019.

The semantics of U+2019 are therefore context dependent. For example, if surrounded by letters or digits on both sides, it behaves as an in-text punctuation character and does not separate words or lines.

So, according to the Unicode Standard, the appropriate Unicode character to use for glottal stops in the Navajo language is MODIFIER LETTER APOSTROPHE (U+02BC) (ʼ).
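The practical effect of choosing a letter over a punctuation mark shows up as soon as software splits text into words. Below is a minimal sketch using Python's `re` module, whose `\w` class matches letters but not punctuation. It is only a rough stand-in for real word segmentation: fuller segmentation rules may keep U+2019 inside a word when it sits between letters, as the quoted passage notes. The function name `tokens` is made up for this example.

```python
import re

WORD = re.compile(r"\w+")

# "Bínáhooʼaah" written two ways, using precomposed accented vowels.
with_letter = "B\u00edn\u00e1hoo\u02bcaah"  # glottal stop as U+02BC
with_quote = "B\u00edn\u00e1hoo\u2019aah"   # glottal stop as U+2019


def tokens(text):
    """Split text into runs of word characters."""
    return WORD.findall(text)


print(len(tokens(with_letter)))  # prints 1: the word stays whole
print(len(tokens(with_quote)))   # prints 2: the word is cut at the quotation mark
```

With U+02BC the word survives as a single token, so naive search, spell checking, and word counts treat it as one word. With U+2019 the same tools see two fragments.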

Before 2023-02-02, I recommended use of RIGHT SINGLE QUOTATION MARK (U+2019) (’) primarily as a means to get away from using the "overloaded" character APOSTROPHE (U+0027) where reasonable. However, going forward, I recommend U+02BC instead.
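For existing text typed with the other two characters, a cleanup pass might look like the sketch below. It assumes the input is purely Navajo text in which every U+0027 and U+2019 stands for a glottal stop; text that also contains genuine quotation marks or English contractions would need a more careful approach. The names `GLOTTAL_STOP` and `normalize_glottal_stops` are made up for this example.

```python
GLOTTAL_STOP = "\u02bc"  # MODIFIER LETTER APOSTROPHE

# Map both look-alike characters to the modifier letter.
# Assumption: neither character is used as real punctuation in the input.
_LOOKALIKES = str.maketrans({
    "\u0027": GLOTTAL_STOP,  # APOSTROPHE
    "\u2019": GLOTTAL_STOP,  # RIGHT SINGLE QUOTATION MARK
})


def normalize_glottal_stops(navajo_text):
    """Replace U+0027 and U+2019 with U+02BC throughout the text."""
    return navajo_text.translate(_LOOKALIKES)


title = "Din\u00e9 Bizaad B\u00edn\u00e1hoo'aah"  # typed with an ASCII apostrophe
print(normalize_glottal_stops(title))
```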

Input methods designed for the Navajo language should dedicate an entire key to MODIFIER LETTER APOSTROPHE (U+02BC) (ʼ), just as they would for the ASCII letter LATIN SMALL LETTER K (U+006B) (k).

In summary,

ʼ is the glottal stop consonant, not '.

See Also

Wikipedia articles exist for the authors:

This blog is powered by ikiwiki.

Copyright © 2020—2025 Steven Baltakatei Sandoval (PGP: 0xA0A295ABDC3469C9). Text is available under the Creative Commons Attribution-ShareAlike license (🅭🅯🄎4.0); additional terms may apply.